E-News 2.0

High-Scale Personalized Media Ecosystem

Distributed Microservices for Media at 100k Concurrent Users

A distributed microservices architecture integrating Real-Time Live TV (HLS streaming), Algorithmic Personalization (deterministic ranking), and AI-Generated E-Newspapers, designed to handle breaking news traffic spikes without degradation.

The Problem: Traditional broadcast systems serve identical content to all users. Modern media demands personalization. But personalization algorithms are computationally heavy, and breaking news creates unpredictable traffic spikes. A typical monolithic web server crashes under the load.

The Solution: Service decomposition following Domain-Driven Design (DDD). Separate the concern of streaming (bandwidth-intensive, CDN-friendly) from feed generation (compute-intensive, cacheable) from personalization (latency-sensitive, algorithmic).

Key Innovation - Fault-Isolated Architecture: If the Personalization Engine slows down, Live TV keeps playing. If Live TV buffers, Article Feeds still load instantly. Each service scales independently. Graceful degradation prevents cascading failures, which is critical for news media where 100% uptime is non-negotiable.

Production Metrics: Handles 100k+ concurrent connections, sub-50ms feed latency for cached requests, real-time WebSocket overlays for breaking news, deterministic ranking algorithm ensuring transparency.

Core Technologies

The Architectural Challenge

Live TV and Static News usually don't mix.

In traditional systems, a spike in Live TV viewers (e.g., during election results) crashes the API servers serving articles. Furthermore, "Personalization" is often just a buzzword for caching everyone's feed.

The Solution: Fault-Isolated Service Mesh. We decomposed the platform into distinct, isolated domains.

- Feed Service: Stateless, read-heavy, aggressive caching.

- Streaming Service: Bandwidth-heavy, offloaded to CDNs.

- Personalization Brain: Compute-heavy, asynchronous ranking.

This means if the AI Ranking engine slows down, the Live Stream keeps playing. If the Live Stream buffers, the Article Feed still loads instantly.

```python
from datetime import datetime, timezone


def compute_relevance_score(article, user_pref):
    """
    We don't use opaque 'black-box' AI for ranking.
    We use a deterministic weighted formula to ensure explainability.
    """
    # 1. Category Alignment (Explicit Interest)
    cat_score = user_pref["weights"].get(article["category"], 0)

    # 2. Bias Proximity (Political Alignment Calculation)
    bias_delta = abs(article["bias_val"] - user_pref["bias_val"])
    bias_score = 1.0 - bias_delta  # Closer = Higher Score

    # 3. Recency Decay (News gets stale fast)
    # Assumes article["published_at"] is a timezone-aware datetime.
    now = datetime.now(timezone.utc)
    hours_old = (now - article["published_at"]).total_seconds() / 3600
    time_score = 1.0 / (1.0 + 0.1 * hours_old)

    # Final Composite Score
    return (
        0.40 * cat_score +
        0.30 * bias_score +
        0.30 * time_score
    )
```

Service Decomposition Strategy

We moved away from a Monolithic CMS to a Domain-Driven Design (DDD) approach.

- The Feed Service handles article retrieval. It doesn't calculate rankings; it just fetches data fast.

- The Personalization Engine is a separate microservice. It takes a User Vector and a Candidate Pool and returns a sorted list of IDs.

- The Streaming Service manages HLS manifests and CDN tokens, completely isolated from editorial content.

This separation prevents "Cascading Failures"—a critical requirement for news media where uptime is non-negotiable.
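One common mechanism for containing such failures is a circuit breaker. The sketch below is a minimal, illustrative version rather than the platform's actual implementation: the class name, the `reset_after` cool-down, and the injectable `clock` are assumptions made for the example. After a run of consecutive failures it stops calling the failing service for a while and serves a fallback instead.

```python
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors, then serves the fallback."""

    def __init__(self, max_failures=5, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds (assumed value)
        self.clock = clock              # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None           # None means the circuit is closed

    def call(self, func, fallback):
        # While open, skip the failing service until the cool-down has elapsed.
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()
            self.opened_at = None  # half-open: let one trial call through

        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            return fallback()

        self.failures = 0  # a success closes the circuit again
        return result
```

A caller would wrap each request to the downstream service, for example `breaker.call(fetch_personalized, fetch_trending)`, where both callables are hypothetical placeholders.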

1routes:

2 - path: /feed/v1/*

3 service: feed-service

4 circuit_breaker:

5 max_failures: 5

6 timeout: 200ms

7 fallback: generic-trending-feed # Fail safe to non-personalized data

8

9 - path: /stream/live/*

10 service: streaming-service

11 caching:

12 enabled: true

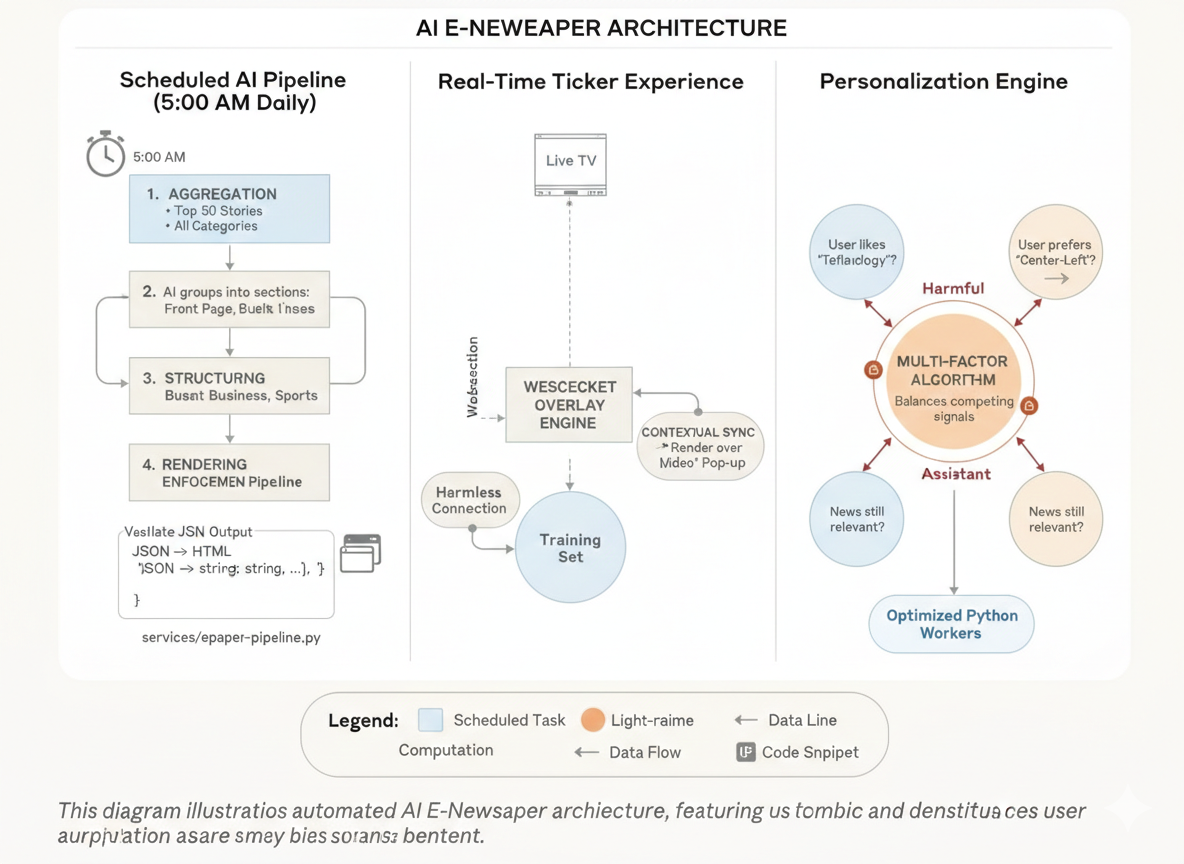

13 ttl: 5s # Live manifests need short TTLThe Personalization Engine

Personalization in news is tricky. If you only show what users like, you create an echo chamber. If you show random news, they disengage.

We implemented a Multi-Factor Ranking Algorithm that balances three competing signals:

- Affinity: "Does the user like Technology?"

- Alignment: "Does the user prefer Center-Left analysis?" (We model bias as a vector, not a filter).

- Decay: "Is this news still relevant?"

We calculate these scores on-the-fly using highly optimized Python workers, ensuring that a user's feed updates the moment they read an article.
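To make the interplay of the three signals concrete, here is a small sketch that ranks a candidate pool with the weighted formula shown earlier. The `id` field, the `rank_candidates` name, the explicit `now` argument, and all sample values are assumptions made for illustration. The other field names follow the scoring function above.

```python
from datetime import datetime, timedelta, timezone


def score(article, user_pref, now):
    """Weighted formula mirroring the scoring function shown earlier."""
    cat_score = user_pref["weights"].get(article["category"], 0)
    bias_score = 1.0 - abs(article["bias_val"] - user_pref["bias_val"])
    hours_old = (now - article["published_at"]).total_seconds() / 3600
    time_score = 1.0 / (1.0 + 0.1 * hours_old)
    return 0.40 * cat_score + 0.30 * bias_score + 0.30 * time_score


def rank_candidates(candidates, user_pref, now):
    """Return article IDs sorted from most to least relevant."""
    ranked = sorted(candidates, key=lambda a: score(a, user_pref, now), reverse=True)
    return [a["id"] for a in ranked]


# Illustrative data only; every value here is made up for the example.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
user = {"weights": {"technology": 0.8, "sports": 0.1}, "bias_val": 0.5}
pool = [
    {"id": "a1", "category": "sports", "bias_val": 0.5,
     "published_at": now - timedelta(hours=1)},
    {"id": "a2", "category": "technology", "bias_val": 0.7,
     "published_at": now - timedelta(hours=5)},
    {"id": "a3", "category": "technology", "bias_val": 0.5,
     "published_at": now},
]
print(rank_candidates(pool, user, now))  # ['a3', 'a2', 'a1']
```

The fresh, well-aligned technology story (`a3`) scores 0.92. The five-hour-old one (`a2`) drops to 0.76. The recent sports story (`a1`) trails at about 0.61 despite perfect bias alignment, because category affinity carries the largest weight.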

Real-Time Sync: The 'Ticker' Experience

Watching Live TV on the web often feels disconnected from the rest of the site. We wanted to replicate the "News Ticker" experience but make it interactive.

We built a WebSocket Overlay Engine.

- The backend pushes breaking headlines to connected clients via WebSockets.

- The frontend renders these headlines over the video player in real-time.

- Contextual Sync: If the news anchor starts talking about "Inflation," the system pushes a metadata tag that creates a "Read More" pop-up for a relevant article instantly.
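On the server side, the push model above amounts to fanning one message out to every connected viewer. The following is a rough, transport-free sketch of that idea. `HeadlineHub` and the two helper functions are hypothetical names, and each `asyncio.Queue` stands in for a client connection that a real deployment would back with an actual WebSocket. The message fields (`type`, `headline`, `article_id`) match what the client code in this section reads.

```python
import asyncio
import json


class HeadlineHub:
    """Fans each published message out to every connected client's queue."""

    def __init__(self):
        self.clients = set()

    def connect(self):
        queue = asyncio.Queue()  # stand-in for one WebSocket connection
        self.clients.add(queue)
        return queue

    def disconnect(self, queue):
        self.clients.discard(queue)

    def publish(self, message):
        payload = json.dumps(message)  # serialize once, send to all clients
        for queue in self.clients:
            queue.put_nowait(payload)


def breaking_news(headline):
    return {"type": "BREAKING_NEWS", "headline": headline}


def context_sync(article_id):
    return {"type": "CONTEXT_SYNC", "article_id": article_id}


async def demo():
    hub = HeadlineHub()
    viewer = hub.connect()
    hub.publish(breaking_news("Election results announced"))
    hub.publish(context_sync("article-123"))
    # Drain the two messages the viewer would have received, in order.
    return [json.loads(await viewer.get()) for _ in range(2)]


messages = asyncio.run(demo())
```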

```javascript
const socket = new WebSocket("wss://api.news.com/headlines");

socket.onmessage = (event) => {
  const data = JSON.parse(event.data);

  // Update the visual ticker without reloading video
  if (data.type === 'BREAKING_NEWS') {
    renderOverlayTicker(data.headline);
  }

  // Sync related articles based on timestamp
  if (data.type === 'CONTEXT_SYNC') {
    showRelatedArticle(data.article_id);
  }
};
```

The AI E-Newspaper Pipeline

While the feed is dynamic, many users still prefer the curated, finite experience of a morning newspaper. We automated this using a Scheduled AI Pipeline.

Every morning at 5:00 AM:

- Aggregation: The system pulls the top 50 stories across all categories.

- Structuring: An AI model groups them into sections (Front Page, Business, Sports).

- Schema Enforcement: We don't trust the AI blindly. We validate the output against a strict JSON Schema to ensure every headline fits the layout.

- Rendering: The JSON is converted into a high-fidelity HTML layout and captured as a PDF/Web Edition.
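The schema-enforcement step can be pictured with a small hand-written validator. This is a plain-Python stand-in, not the JSON Schema check the pipeline actually uses. The layout shape (`sections`, `name`, `headlines`), the 80-character headline limit, and the choice to require all three example sections are assumptions made for illustration.

```python
MAX_HEADLINE_CHARS = 80  # assumed layout limit, not taken from the real system
REQUIRED_SECTIONS = {"Front Page", "Business", "Sports"}  # assumed to be mandatory


def validate_layout(structure):
    """Return a list of problems; an empty list means the layout is usable."""
    sections = structure.get("sections") if isinstance(structure, dict) else None
    if not isinstance(sections, list):
        return ["'sections' must be a list"]

    errors = []
    names = set()
    for i, section in enumerate(sections):
        if not isinstance(section, dict):
            errors.append(f"section {i} is not an object")
            continue
        name = section.get("name")
        headlines = section.get("headlines")
        if isinstance(name, str):
            names.add(name)
        else:
            errors.append(f"section {i} has no name")
        if not isinstance(headlines, list) or not headlines:
            errors.append(f"section {i} has no headlines")
            continue
        for headline in headlines:
            # Reject anything that is not text or would overflow the layout.
            if not isinstance(headline, str) or len(headline) > MAX_HEADLINE_CHARS:
                errors.append(f"section {i} has a headline that does not fit")

    missing = REQUIRED_SECTIONS - names
    if missing:
        errors.append(f"missing sections: {sorted(missing)}")
    return errors
```

Returning a list of problems rather than a bare boolean makes it easier to log why a generated layout was rejected before retrying.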

```python
import logging

logger = logging.getLogger(__name__)

# fetch_top_articles, ai_model, validate_schema, EPAPER_SCHEMA,
# retry_generation and render_to_static_site are project helpers
# defined elsewhere in the pipeline.


def generate_daily_edition():
    # 1. Get Candidates
    articles = fetch_top_articles(limit=50)

    # 2. Structure via AI
    structure = ai_model.organize_layout(articles)

    # 3. Guardrail: Validate Layout
    if not validate_schema(structure, EPAPER_SCHEMA):
        logger.error("AI generated malformed layout. Retrying...")
        return retry_generation()

    # 4. Render
    return render_to_static_site(structure)
```

Resilience & Fallback Strategies

In high-scale media, "Error 500" is not an option. We implemented defensive engineering patterns throughout the stack.

- Degradation: If the Personalization Engine is too slow (>200ms), the API automatically falls back to a pre-computed "Global Trending" feed. The user never sees a loading spinner.

- Circuit Breakers: If the Database gets overwhelmed, we stop sending write-requests (like 'mark as read') and queue them in Redis, prioritizing read-requests (loading articles) to keep the site alive.
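The degradation rule above can be sketched with a timeout around the personalization call. This is an illustrative version only: `get_feed`, the two stand-in engines, and the sample feeds are hypothetical, and the 200 ms budget comes from the text above.

```python
import asyncio

PERSONALIZATION_TIMEOUT_S = 0.2  # the 200 ms budget described above


async def get_feed(user_id, personalize, trending_feed):
    """Return the personalized feed, or the trending feed if ranking is too slow."""
    try:
        return await asyncio.wait_for(personalize(user_id), PERSONALIZATION_TIMEOUT_S)
    except (asyncio.TimeoutError, ConnectionError):
        # Too slow or unreachable: serve the pre-computed feed instead.
        return trending_feed


async def slow_engine(user_id):
    await asyncio.sleep(1.0)  # simulates an overloaded ranking service
    return ["personalized-1"]


async def fast_engine(user_id):
    return ["personalized-1"]


trending = ["trending-1", "trending-2"]
slow_result = asyncio.run(get_feed("u1", slow_engine, trending))
fast_result = asyncio.run(get_feed("u1", fast_engine, trending))
```

Here `slow_result` ends up as the trending feed because the simulated engine exceeds the budget, while `fast_result` is the personalized list.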